MoSpeeDi (2017-21) & ChaSpeePro (2021-25)

Summary of the ChaSpeePro project

Oral verbal communication is the main communication channel among humans. In most communication contexts, speakers have to speak clearly and accurately in order to be intelligible. Clear speech can be disrupted in motor speech disorders (MSD), in which a broad set of speech dimensions (articulation, speech rate, voice, prosody) can be altered in the course of several neurological diseases. MSDs are due to disruption of the processes that transform a linguistic message into articulated speech, i.e.,

(a) the retrieval/encoding, contextualization and coordination of speech goals into a speech plan (motor speech planning),

(b) the preparation of motor programs with detailed neuromuscular specifications (motor programming), and

(c) the execution of these programs.

Defining motor speech planning and motor programming, as well as distinguishing impairments at these two levels in terms of speech features and clinical differential diagnosis, is far from clear-cut.

The ongoing ChaSpeePro Sinergia project builds on the successful outcomes of the previous Sinergia MoSpeeDi project (2017-2021), led by the same multidisciplinary consortium. Thanks to complementary expertise in speech and language pathology, psycholinguistics, neurology, phonetics, and speech engineering, we have collected a substantial database of MSD speech, developed procedures sensitive enough to assess and classify mild and moderate MSD, and obtained converging experimental evidence characterizing the processes that occur at the planning and motor programming stages. This knowledge, gained from carefully designed experiments in laboratory settings, should now be extended to speech production elicited in a more natural clinical setting. The distinction between speech planning and programming processes should also be investigated further to overcome the difficulty of defining and operationalizing processes at these two stages.

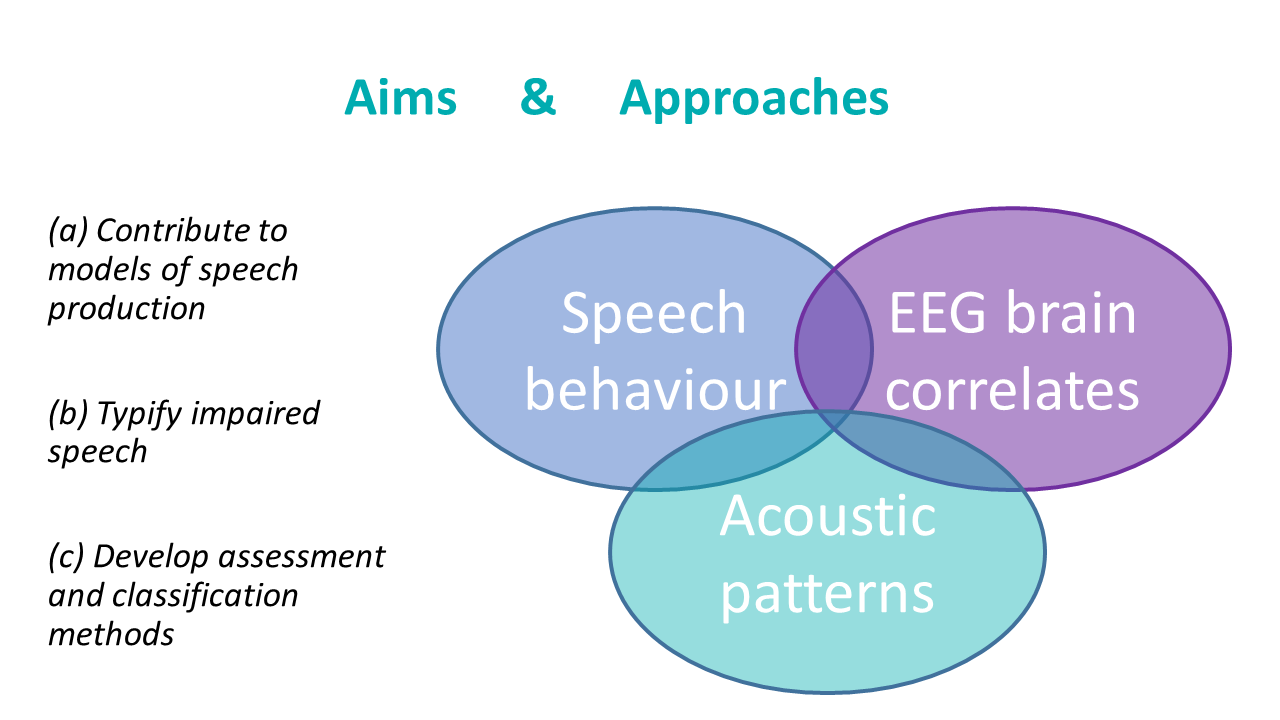

With the overarching goal of understanding and modelling speech planning and programming and their related disorders, we will pursue our synergistic approach, which integrates methods and draws on converging evidence from experimentally induced speech behaviours, electrophysiological brain signals, and acoustic analyses of typical and impaired speech. In particular, we propose to

(a) develop assessment and classification methods applicable to realistic clinical constraints and needs,

(b) build on the convergence of phonetic knowledge-based approaches and knowledge-free approaches,

(c) enrich our set of acoustic descriptors in order to capture alterations at different scales of speech organization, and

(d) complement acoustic-based characterization of speech planning, programming, and MSD classification with EEG signals.

The outcomes of the project will rely on substantial data of disordered speech collected from over 180 French-speaking participants with different types of MSDs, including apraxia of speech (AoS) and subtypes of dysarthria following stroke or neurodegenerative diseases. Results will be used to challenge current models of speech production, which need to integrate data from MSD, and will contribute to the development of speech assessment systems adapted to atypical speech and to the needs of clinical practice.